There’s no reason why you shouldn’t be split testing every single cold email you send.

Cold email templates save you the time you’d otherwise spend writing out individual messages by hand.

But not all templates are created equal.

How can you tell whether the messages you’re sending are as effective as they could be?

The answer is A/B testing — a process that pits two versions of the same message against each other in order to determine which variation drives more desired outcomes. In the case of cold sales email, in particular, A/B testing can help you get:

- More opens

- More link click-throughs

- More replies

- More conversions

Let’s look at the benefits of split testing, what to test, and the tools that will help anyone using cold emails in their sales process take advantage of this powerful technique.

The Benefits of A/B Split Testing

To be 100% clear, if you’re sending cold sales emails, you can benefit from A/B split testing. Just take a look at the following case studies:

- HubSpot used A/B testing to determine whether their audience responded better to emails that featured the company or a real person as the sender. Sending from a real person won, driving 0.53% more opens, 0.23% more clicks, and 131 more leads.

- An A/B test for Money Dashboard found that focusing on their business (with the subject line “Please put us out of our misery”) — rather than on recipients themselves — resulted in a 104.5% increase in opens for inactive subscribers and a 103.3% increase in clicks for active subscribers.

- Wishpond used A/B testing to test the impact of adding “You” to their subject lines in emails intended to boost sign-ups to their VIP demo. Ultimately, they found the subject line “Social Media Stats You Need to Know for 2014” resulted in 11% more opens than “Social Media Stats for 2014.”

While these aren’t exclusively examples of A/B testing on cold sales emails, they don’t need to be. What these — and the hundreds of other case studies published online — demonstrate is that split testing your sales emails can drive performance gains, no matter what you’re selling or what industry you operate in.

Here are the benefits of A/B split testing for your business:

1 Boost User Engagement

The header or subject line, graphics, call-to-action (CTA) forms and language, layout, typefaces, and colors, among many other elements, may all be A/B tested on a website, application, ad, or email.

Split testing one modification at a time reveals which changes affected users’ behavior and which did not. Updating your emails with the “winning” modifications improves engagement and, over time, optimizes them for success.

2 Reduce the Risk

A/B split testing helps you avoid costly and time-consuming modifications that later prove unproductive. Crucial changes can be well-informed, eliminating mistakes that would otherwise tie up resources for little or no return.

One of the most significant purposes of A/B split testing is to rule out changes that would hurt performance. If a test suggests that a modification may lead to a decline in conversions, don’t go ahead with it.

3 Increase Sales and Conversion Rates

Each of the previously stated advantages of A/B testing contributes to a rise in overall sales volume.

Beyond the immediate sales boost that optimized adjustments generate, testing results in an improved customer experience, which in turn fosters trust in the brand. This can lead to more loyal, repeat customers and more sales for the company.

A/B testing is one of the most straightforward and effective ways to determine which content converts visits into sign-ups and sales. Understanding what works and what does not lets you convert more leads.

What to Split Test

Having ruled out the argument that A/B split testing isn’t important, let’s look at the most common response to YesWare’s survey: “It’s valuable, but I don’t know what to test.”

Determining what to test in your cold emails can be challenging — but not necessarily for the reasons you expect.

Far from having nothing to test, marketers face a seemingly endless number of options. That can make moving forward more paralyzing than if you had nothing to test at all.

That said, just because you can test everything doesn’t mean you should.

Instead, I recommend starting with tests on three key areas:

- Subject line

- Opening line

- Your call-to-action (CTA)

1 Subject Line

Since your subject line is the first thing prospects see in their inbox, it makes sense to start here, as improvements can translate directly into more opens (and then, potentially, more clicks, replies, and conversions).

Review the best practices covered extensively online and choose one to test. For instance, an article on HubSpot suggests that, with “40% of emails being opened on mobile-first, we recommend using subject lines with fewer than 50 characters to make sure the people scanning your emails read the entire subject line.”

Personalizing your email subject line is widely regarded as a highly efficient method of capturing your prospect’s interest.

A subject line that shouts, “yes, it’s about you,” will give the prospect a strong motivation to click and read the message. However, this advice should not be taken at face value. Run a split test and see how it goes.

Incorporating your prospect’s name into the subject line of an email does not, on its own, constitute personalization. Try including their company’s name, their role or position, or a specific event or conference in their geographic location.

Don’t overthink it, though. Test HubSpot’s wisdom for yourself by shortening the subject line of one of your existing templates and A/B split testing the two.
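One way to set up this kind of subject line test is sketched below in Python. It randomly splits a recipient list into two groups so that each variant goes to a comparable audience, and it checks each subject line against the fewer-than-50-characters guideline from the quote above. The function names, subject lines, and addresses are hypothetical, and random assignment is an assumption about how you would run the test rather than a description of how any particular email tool works.

```python
import random

# Threshold taken from the "fewer than 50 characters" recommendation quoted above.
MAX_SUBJECT_LENGTH = 50


def split_recipients(recipients, seed=None):
    """Randomly split recipients into two groups of (nearly) equal size."""
    shuffled = list(recipients)
    random.Random(seed).shuffle(shuffled)
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


def is_short_subject(subject, limit=MAX_SUBJECT_LENGTH):
    """Return True if the subject line has fewer than `limit` characters."""
    return len(subject) < limit


# Hypothetical subject lines and addresses, for illustration only.
subject_a = "Quick question about how your team handles outbound sales email"
subject_b = "Quick question about outbound email"

recipients = [f"prospect{i}@example.com" for i in range(1, 11)]
group_a, group_b = split_recipients(recipients, seed=42)

print("Variant A:", len(subject_a), "characters, short:", is_short_subject(subject_a))
print("Variant B:", len(subject_b), "characters, short:", is_short_subject(subject_b))
print("Group sizes:", len(group_a), len(group_b))
```

Shuffling before splitting keeps the two groups comparable, so a difference in opens is more likely to come from the subject line than from who happened to receive each version.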

For more ideas to test, check out the following resources:

- This Is How To Write Better Email Subject Lines That Get More Opens

- Best Practices for Email Subject Lines

- Improve Your Open Rates With These 12 Subject Line Tweaks

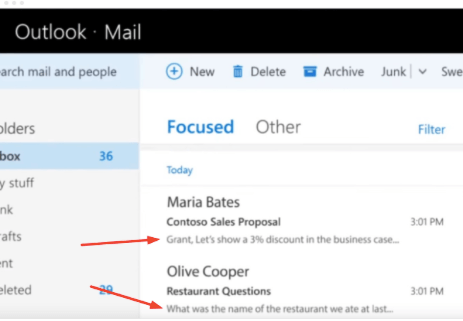

2 Opening Line

Depending on your recipients’ inbox arrangements, they may see your opening line as part of a preview before opening your message.

It’s up to you to make this opportunity count. Test different opening line variations, including:

- A question versus a statement

- A mention of a shared connection

- A personalized tip or recommendation

- One that mentions your company versus one that does not

- “Small talk” versus getting down to business

3 Your CTA

Finally, test the specific action you’re asking prospects to take. While we all know not to ask for the moon on the first contact, you may still see a major difference in performance by asking to send a proposal versus asking for a callback.

Only after you’ve tested these three items should you move on to other A/B split tests, which could include measuring the impact of:

- Your use of personalization fields

- The inclusion of imagery in your messages

- Different fonts, font sizes, or font colors

- HTML versus plain text formatting

- The length of your messages

- How frequently you email prospects

- Including a “PS” in your message

- When you schedule your messages to be delivered

It’s not that these tests aren’t important, but focus on your big wins first, especially if the limited volume of messages you send makes it difficult to reach statistical significance.
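To make “statistical significance” concrete, here is a minimal Python sketch of a two-proportion z-test, one common way to check whether the difference between two open or reply rates is larger than chance alone would explain. The function name, the example counts, and the conventional 0.05 threshold are illustrative assumptions, not figures from any real campaign.

```python
import math


def two_proportion_z_test(successes_a, sent_a, successes_b, sent_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    rate_a = successes_a / sent_a
    rate_b = successes_b / sent_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (successes_a + successes_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so there is nothing to compare
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


ALPHA = 0.05  # conventional significance threshold (an assumption)

# Hypothetical counts: the same 20% vs. 30% open rates at two send volumes.
z_small, p_small = two_proportion_z_test(10, 50, 15, 50)
z_large, p_large = two_proportion_z_test(100, 500, 150, 500)

print(f"50 sends per variant:  z={z_small:.2f}, p={p_small:.4f}, significant={p_small < ALPHA}")
print(f"500 sends per variant: z={z_large:.2f}, p={p_large:.4f}, significant={p_large < ALPHA}")
```

With these made-up numbers, the same 20% versus 30% open rates are not significant at 50 sends per variant but are at 500, which is why low-volume senders do better to start with tests that are likely to produce large differences.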

Split Testing Tools

One of the beautiful things about A/B split testing your cold emails is that you don’t need a fancy monitoring system to determine whether or not the changes you’ve made are having an impact.

If your email marketing provider offers tools that measure statistical significance, that’s great. Use them.

But even if you don’t have access to these kinds of measurements, you can still benefit from A/B testing. According to Close.io’s Steli Efti:

“In the early stages of your startup, you will lack the data to make perfect decisions.

Overcome this hump by gathering qualitative data and making intuitive decisions based on talking to customers.”

Efti recommends picking up the phone after sending cold sales emails to gather the kinds of qualitative data he mentions in the quote above.

Asking what in your message resonated for recipients — if anything — can give you as much valuable insight for improving your campaigns as you’ll gain from running tests to statistical significance.

Ultimately, sending cold email messages to sales prospects is as much an art as it is a science.

Combine the strategies described above with gut instinct and feedback from your prospects. Continually test new messages and new templates to find your winning combination.

Conclusion

With the right split testing tools, you can gather valuable data to improve your cold email campaigns. Follow this template to enjoy the benefits of A/B split testing in 2021. Try it out yourself, and let us know what results you get!